Securing ICS: NIST 800-82 and VMware vDefend Explained

Executive Summary

NIST Special Publication 800-82 is a foundational guidance document for securing Industrial Control Systems (ICS) and Operational Technology (OT). It provides a framework for protecting critical infrastructure by recommending security controls and architectural principles. VMware’s vDefend is a suite of security products for virtualized environments that, while not exclusively designed for ICS, offers capabilities that directly support and help implement many of the recommendations in NIST 800-82. Let’s explore the key concepts of both and detail how vDefend can be a crucial tool in an organization’s strategy to align with NIST 800-82.

Understanding NIST SP 800-82: Securing Industrial Control Systems

NIST SP 800-82 provides guidance on securing ICS, including Supervisory Control and Data Acquisition (SCADA) systems, Distributed Control Systems (DCS), and other control system configurations such as Programmable Logic Controllers (PLCs). The key objectives of this guidance are to:

- Establish a secure operational environment: By recommending robust network architectures, such as the Purdue Model, which emphasizes network segmentation and segregation.

- Restrict access: Implementing strong access controls for both logical and physical access to ICS networks and devices.

- Protect against exploitation: Hardening ICS components and implementing threat detection and monitoring.

- Maintain functionality: Ensuring that security measures do not compromise the high availability and real-time operational requirements of ICS.

- Facilitate incident response: Preparing for and responding to security incidents in a way that minimizes impact on operations.
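
To make the segmentation objective concrete, here is a minimal Python sketch of a Purdue-style zone check. It is not taken from NIST SP 800-82 or from any vDefend API: the zone names, the level numbers, and the `Flow` and `crosses_it_ot_boundary_directly` names are assumptions chosen for illustration. The idea it shows is that traffic between enterprise IT and the OT levels should terminate in a DMZ rather than cross directly.

```python
from dataclasses import dataclass

# Illustrative Purdue-style levels. Zone names and numbers are assumptions.
ZONE_LEVEL = {
    "process": 0,       # sensors and actuators
    "control": 1,       # PLCs and similar controllers
    "supervisory": 2,   # HMIs and SCADA servers
    "operations": 3,    # historians and site operations
    "dmz": 3.5,         # DMZ between OT and IT
    "enterprise": 4,    # business IT systems
}
DMZ_LEVEL = ZONE_LEVEL["dmz"]


@dataclass(frozen=True)
class Flow:
    src_zone: str
    dst_zone: str


def crosses_it_ot_boundary_directly(flow: Flow) -> bool:
    """Return True when a flow links IT and OT zones without ending in the DMZ."""
    src = ZONE_LEVEL[flow.src_zone]
    dst = ZONE_LEVEL[flow.dst_zone]
    low, high = min(src, dst), max(src, dst)
    # A flow is a violation only if the DMZ level lies strictly between its ends.
    return low < DMZ_LEVEL < high


flows = [
    Flow("enterprise", "dmz"),
    Flow("dmz", "operations"),
    Flow("enterprise", "control"),
]
violations = [f for f in flows if crosses_it_ot_boundary_directly(f)]
```

In this sample, only the flow from `enterprise` to `control` is flagged, because it skips the DMZ. A real deployment would express the same intent as firewall policy rather than as a script.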

The latest revision, NIST SP 800-82r3, expands the scope from ICS to the broader category of Operational Technology (OT), reflecting the increasing convergence of IT and OT environments.

Introducing VMware vDefend

VMware vDefend is a suite of security solutions designed to protect workloads in virtualized data centers and private clouds. Its primary components relevant to this discussion are:

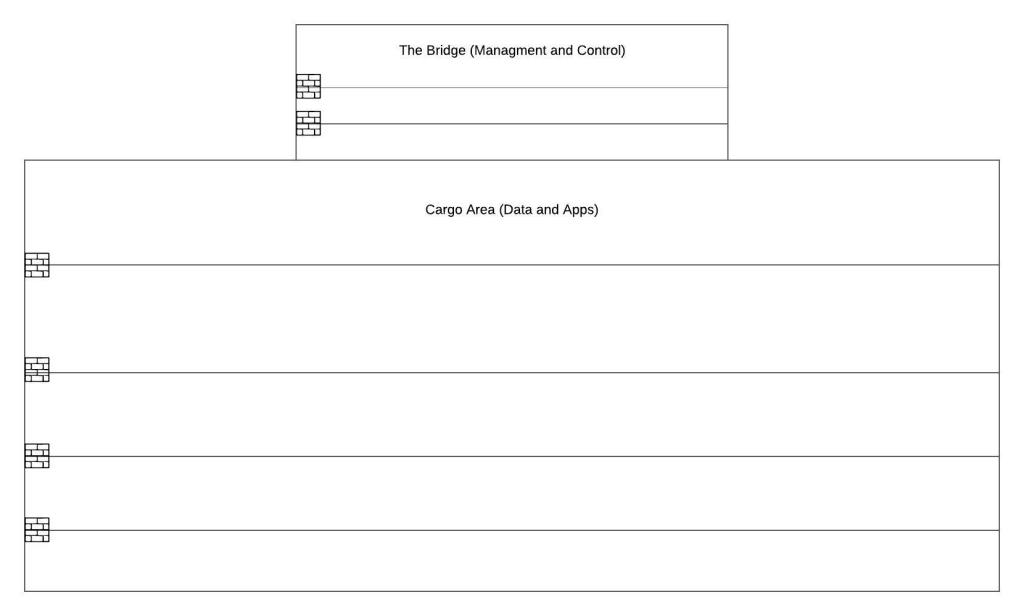

- vDefend Distributed Firewall: A software-defined, Layer 2-7 stateful firewall that enables micro-segmentation. It allows the creation of granular security policies for individual workloads, effectively establishing a “firewall for every virtual machine.” This is particularly powerful for controlling east-west (server-to-server) traffic within a data center. Because enforcement happens at each workload’s virtual network interface, the trust zone shrinks to the individual workload.

- vDefend Advanced Threat Prevention (ATP): This component adds several advanced security capabilities:

  - Intrusion Detection/Prevention System (IDS/IPS): To detect and block known threats and exploits.

  - Malware Prevention: To analyze suspicious files in an isolated environment to identify malware.

  - Network Traffic Analysis (NTA): To identify anomalous behavior and potential zero-day threats.

  - Network Detection and Response (NDR): To correlate signals from the three technologies above (IDS/IPS, Malware Prevention, and NTA) into campaigns.

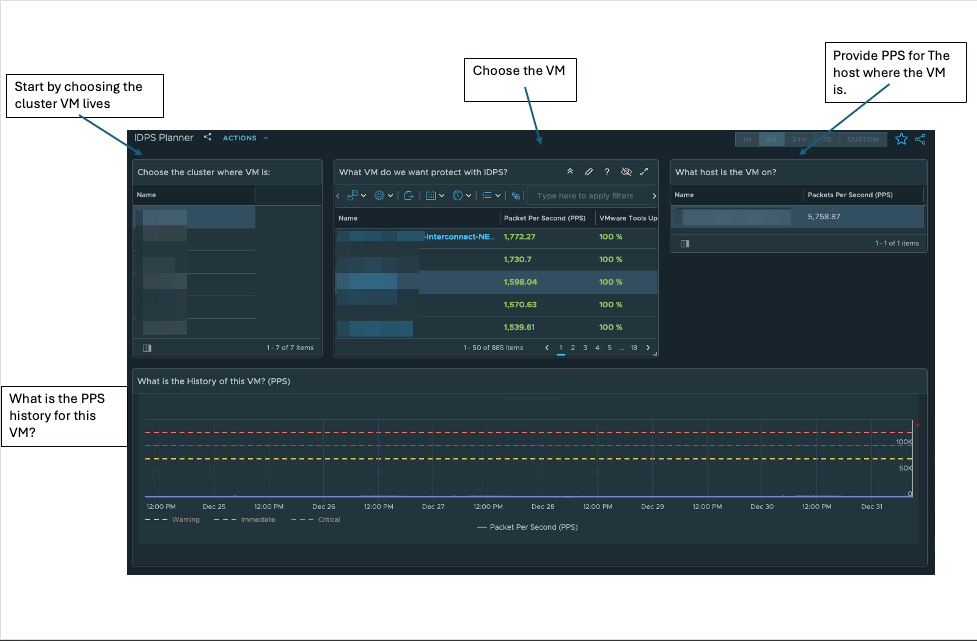

- Security Intelligence: Analyzes traffic flows and recommends micro-segmentation policies, which simplifies implementing a zero-trust security model. It helps you answer the question “Who is talking to whom, on what ports, and how often?”
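
The "who is talking to whom" question can be illustrated with a small Python sketch that counts observed flows per source, destination, and port. This is a generic illustration, not how Security Intelligence is implemented: the `FlowRecord` type, the `summarize` function, and the sample host names and ports are all made up for the example.

```python
from collections import Counter
from typing import NamedTuple


class FlowRecord(NamedTuple):
    src: str
    dst: str
    port: int


def summarize(records):
    """Count how often each (source, destination, port) combination appears."""
    return Counter(records)


# Hypothetical sample data for a small virtualized SCADA environment.
records = [
    FlowRecord("hmi-01", "scada-01", 502),
    FlowRecord("hmi-01", "scada-01", 502),
    FlowRecord("scada-01", "historian-01", 1433),
    FlowRecord("eng-ws-01", "scada-01", 22),
]

summary = summarize(records)
for (src, dst, port), count in summary.most_common():
    print(f"{src} -> {dst} on port {port}: {count} flows")
```

A summary like this is the raw material for policy recommendations: each combination that is observed and expected becomes a candidate allow rule, and everything else stays blocked.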

Bridging the Gap: How vDefend Supports NIST 800-82 Compliance

vDefend’s capabilities align well with many of the security controls and principles recommended in NIST 800-82. The following table illustrates this mapping:

| NIST 800-82 Concept/Control | How vDefend Addresses It |

|---|---|

| Network Segmentation & Segregation | vDefend Distributed Firewall is a powerful tool for micro-segmentation. It can create logical security zones around critical ICS applications, even if they reside on the same physical host. This helps enforce the Purdue Model’s concepts of separating IT and OT networks and creating DMZs. |

| Boundary Protection | The vDefend Distributed Firewall can enforce strict access controls at the virtual network interface of each workload, acting as a critical boundary protection mechanism. It can filter traffic based on source, destination, port, and protocol, ensuring that only authorized communication is allowed. |

| Access Control | Through micro-segmentation policies, the vDefend Distributed Firewall enforces the principle of least privilege. It ensures that virtual machines and applications can only communicate with the specific systems they need to, and nothing more. This helps prevent lateral movement of threats. |

| System and Communications Protection | vDefend Advanced Threat Prevention (ATP) provides multiple layers of protection. The IDS/IPS can detect and block attempts to exploit vulnerabilities in ICS software. Malware Prevention can prevent malware from spreading within the ICS environment. |

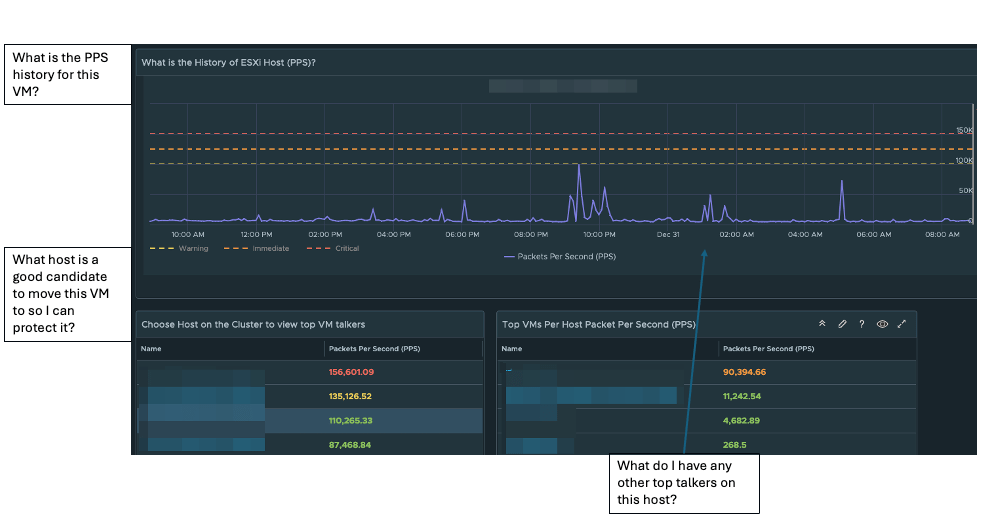

| Continuous Monitoring & Threat Detection | The NTA capabilities of vDefend ATP provide visibility into network traffic, helping to detect anomalous behavior that could indicate a security incident. This supports the need for continuous monitoring in ICS environments. |

| Incident Response | When the NDR system flags a campaign, your security team doesn’t just get an alert; they get the ability to take immediate action. They can apply a quarantine policy using the Distributed Firewall to instantly isolate the compromised workload and stop the attack from spreading further. |

| Virtual Patching | The IDS/IPS in vDefend ATP can be used for “virtual patching.” If a vulnerability is discovered in an ICS application but a patch is not yet available or cannot be immediately applied, the IDS/IPS can be configured to block traffic that attempts to exploit that specific vulnerability. |
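
The least-privilege behavior described in the Access Control and Boundary Protection rows can be sketched as a first-match rule list with a default drop. The Python below is a simplified model under stated assumptions, not the vDefend rule engine or its API: the `Rule` fields, the group names, and the `evaluate` function are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Rule:
    src_group: str
    dst_group: str
    port: Optional[int]  # None matches any port
    action: str          # "allow" or "drop"


def evaluate(rules, src_group, dst_group, port):
    """Return the action of the first matching rule, or "drop" if none match."""
    for rule in rules:
        if (
            rule.src_group == src_group
            and rule.dst_group == dst_group
            and rule.port in (None, port)
        ):
            return rule.action
    return "drop"  # default deny: anything not explicitly allowed is blocked


# Hypothetical allow list for a small SCADA application.
rules = [
    Rule("hmi", "scada-server", 502, "allow"),
    Rule("scada-server", "historian", 1433, "allow"),
]

allowed = evaluate(rules, "hmi", "scada-server", 502)
blocked = evaluate(rules, "hmi", "historian", 1433)
```

Here `allowed` is `"allow"` because a rule names that exact path, while `blocked` is `"drop"` because no rule lets the HMI reach the historian. The default drop is what limits lateral movement: a compromised HMI gains no paths beyond the ones it was explicitly given.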

Practical Application: A Use-Case Scenario

Consider a manufacturing plant with a virtualized SCADA system. The plant wants to align with NIST 800-82 to improve its cybersecurity posture. Here’s how vDefend could be implemented:

- Asset Discovery and Policy Recommendation: The plant uses vDefend Security Intelligence to discover all the virtualized components of its SCADA system and to analyze the communication patterns between them. Based on this analysis, Security Intelligence recommends micro-segmentation policies.

- Micro-segmentation with the Distributed Firewall: The plant implements the recommended policies using the vDefend Distributed Firewall. This creates a secure perimeter around the entire SCADA system and also creates smaller, more granular security zones around individual components like the HMI, historian, and engineering workstation. This prevents a compromise of one component from easily spreading to others.

- Threat Prevention with ATP: The plant enables vDefend Advanced Threat Prevention. The IDS/IPS is configured with rules to protect against known ICS vulnerabilities. The NTA feature establishes a baseline of normal network behavior and alerts security personnel to any deviations.

- Ongoing Monitoring and Response: The plant’s security team monitors the vDefend NDR for campaigns. If a campaign indicates a potential compromise, they can use the Distributed Firewall to immediately quarantine the affected virtual machine, preventing it from communicating with any other system while they investigate.
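
The monitoring and response steps above can be sketched in a few lines of Python. This is a deliberately simple model, assuming that "deviation from baseline" means a connection between a pair of workloads that was never seen before. Real NTA and NDR analysis is far richer, and the function names and host names here are hypothetical rather than part of any vDefend interface.

```python
def find_deviations(baseline_pairs, observed_pairs):
    """Return observed (source, destination) pairs that are not in the baseline."""
    return observed_pairs - baseline_pairs


def quarantine(allowed_pairs, workload):
    """Return a copy of the allow list with every pair involving the workload removed."""
    return {pair for pair in allowed_pairs if workload not in pair}


# Hypothetical baseline learned during normal operation.
baseline_pairs = {
    ("hmi-01", "scada-01"),
    ("historian-01", "scada-01"),
}

# Hypothetical traffic seen later: the historian contacts a new peer.
observed_pairs = baseline_pairs | {("historian-01", "eng-ws-01")}

deviations = find_deviations(baseline_pairs, observed_pairs)
suspects = {src for src, _ in deviations}

allowed_pairs = set(baseline_pairs)
for workload in suspects:
    allowed_pairs = quarantine(allowed_pairs, workload)
```

After the loop, `historian-01` no longer appears in any allowed pair, so under a default-deny policy it can neither send nor receive traffic while the team investigates. The rest of the SCADA system keeps its existing allow rules, which matters in an environment where availability is a primary requirement.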

Conclusion

While NIST SP 800-82 provides the “what” and “why” of ICS security, VMware’s vDefend suite offers a powerful set of tools to address the “how.” By leveraging vDefend’s capabilities for micro-segmentation, advanced threat prevention, and security intelligence, organizations can effectively implement many of the key security controls recommended in NIST 800-82. This can significantly improve the security posture of their ICS and OT environments, reducing the risk of cyber-attacks and ensuring the continued availability and safety of their critical operations.